Anyone reading this will be asking themselves that exact question. Before I put some research into this, I was asking it myself, so allow me to clear some muddied water and bust some of the jargon around the biggest revolution since the industrial one, way back in the 18th century.

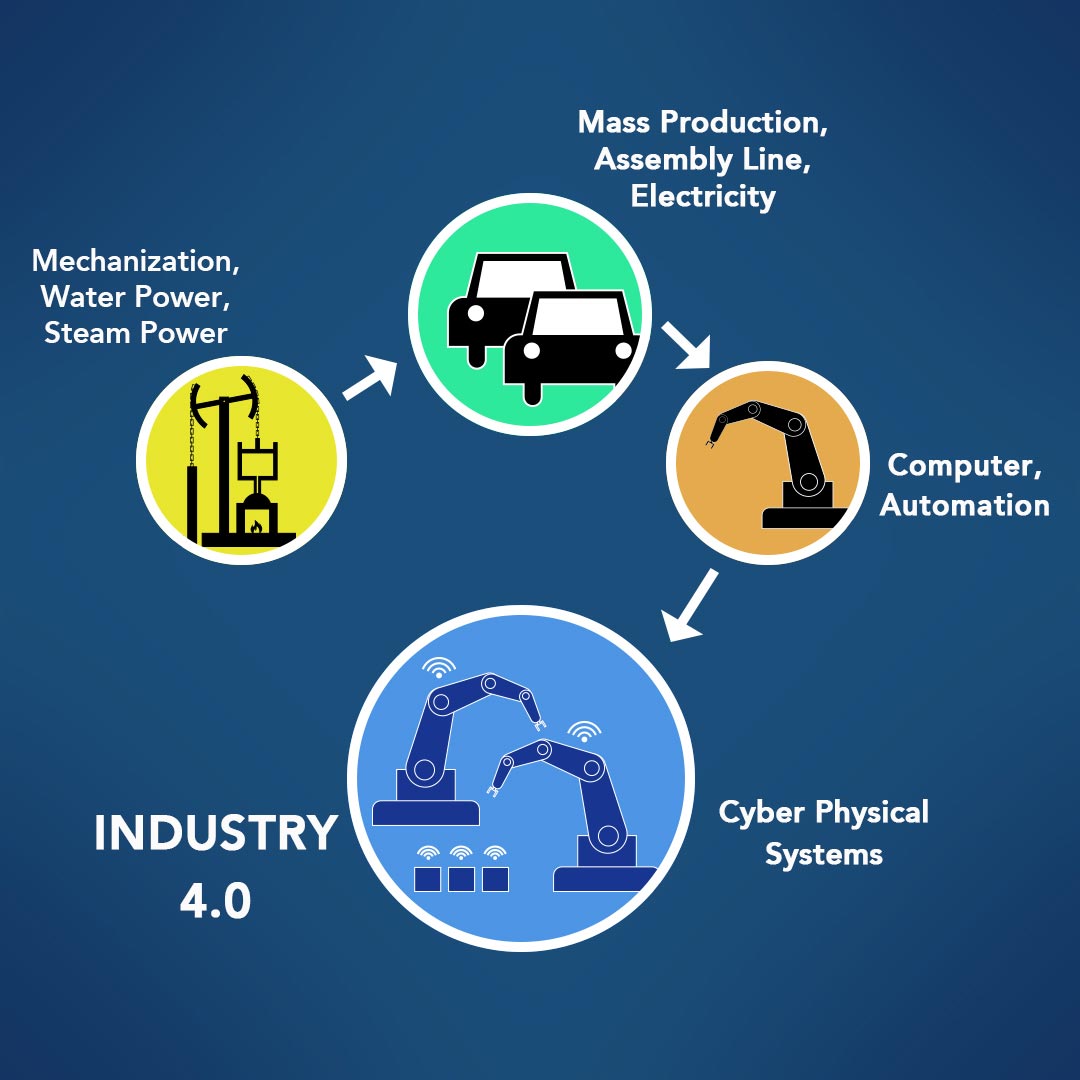

Industry 4.0 is the digital transformation of industry markets, with artificial intelligence and cyber physical systems leading the way, it can be quickly summarised by the image below:

The world that we live in is very digital and information-driven. You are reading this on a phone, tablet, laptop or desktop computer (remember those?) and information is constantly being transferred to get what you want, in front of your eyes, in an instant.

The Internet of Things (IoT) has played a key role in our latest realisation that technology could make yet another advancement. It is the concept of connecting everyday objects to the internet, typically through embedded Wi-Fi, including your phone, fridge, car or home hub. It has given manufacturers a way to link us all instantly through a new wave of connected gadgets, from the useful, like smartwatches, to the not-so-useful, such as rectal thermometers. (Yes, you read that correctly.)

It is estimated that by 2020 there will be 26 billion connected devices, outnumbering humans nearly four to one.

Let’s go back to the beginning

The Industrial Revolution set the tone for how manufacturing processes could transition from the old to the new: we went from hand production and constant manual work to utilising steam, water power, and mechanisation. This led to the rise of the factories which still stick out on some skylines, mostly unused now because of the later stages in the chain.

The revolution helped to raise the standard of living, with household incomes and populations witnessing sustained growth, and trade developments helped to add more fuel to the fire. Pun very much intended.

Now, just because standards of living were better does not mean that the working conditions were pristine. The Industrial Revolution, despite the positives of revenue and increased productivity, was littered with terrible working conditions that caused deformities, illness and death. Workers were made to work fourteen- to sixteen-hour days on incredibly low wages because, hey, who are you to complain? You have a job.

Children were a big part of work in the Industrial Revolution. Kids as young as five would be put into factory work as they were small, could fit into tighter spaces, were a lot nimbler, and had small fingers that could get into hard-to-reach spots to apply oil to mechanisms or remove broken threads.

By the mid-18th century, Britain was at the forefront of the Industrial Revolution, utilising influence with colonies in North America and Africa, and some political sway on the Indian subcontinent. The textile industry was one of the major industries to benefit from the revolution. Figures showed the British textile industry used 52 million pounds of cotton in 1800; this number grew more than tenfold by 1850, reaching a consumption of 588 million pounds.

The factory system became a characteristic of the Industrial Revolution, and many places in the UK became known for their factories, including Stoke, Derby and Manchester.

Electricity sparks a change

The first Industrial Revolution ended by the mid-1800s. By around 1870, a new wave of advancement in manufacturing was under way, driven by production technology that took systems already in place and applied them on a much bigger scale.

The era was characterised by the invention of the Bessemer process by Sir Henry Bessemer. The process allowed the mass production of steel and decreased labour requirements, which I’m sure would have alleviated the pressure on millions of proletarians.

This time proved fruitful for businesses and consumers alike as production costs fell along with prices, allowing living standards to improve dramatically. The years from 1870 to 1890 saw one of the greatest increases in economic growth in history for a twenty-year period.

Electricity became a massive feature of this time and laid the foundation for this new-age industrial phenomenon. Electrification allowed the assembly line and mass production of Industry 2.0 to become a reality, and it was later named the most important engineering achievement of the 20th century by the National Academy of Engineering in America.

Britain led the way in this revolution as Sir Joseph Swan developed one of the first practical incandescent light bulbs. The Savoy Theatre in Westminster, London, became the first public building in the world to be lit entirely by electricity, and Mosley Street in Newcastle was the first street in the world to be lit by electrical street lighting. A pioneering power station was built in Deptford, supplying central London with energy through high-voltage AC power and laying the foundations for the transformer-based networks which would go on to light streets around the country, and then the world.

The increase in productivity due to mechanisation and electricity meant a rise in unemployment, as people whose jobs had been replaced by machines struggled to find work.

Computers and automation

1946 saw a lot of important events in world history: the United Nations held its first meeting, Harry Truman set up the Central Intelligence Group, the forerunner of the Central Intelligence Agency (CIA), Clement Attlee agreed with India’s right to independence, and ENIAC, the first general-purpose electronic computer, was completed at the University of Pennsylvania. It occupied 1,800 square feet, unthinkable in the modern day where we have computers which take up less space than a human torso. Data centres take up 1,800 square feet nowadays!

In 1947, Ford became the first major company to establish an automation department, which followed the introduction of feedback controllers in the 1930s. Automation showed many benefits, including increased productivity, savings on labour and material costs, and improved quality; but again, the problem arose of people losing out on jobs due to increased automation in the workplace. But did businesses mind if they were saving money and getting a better return on investment? It’s a categorical nope.

These developments also led to further questions regarding computers and automation:

All are valid questions which remain major talking points today and usually make or break the implementation of an automated system, but all have suitable solutions. Meanwhile, the faster production rate, cheaper labour costs, and the ability to replace hard, physical work and reduce the number of occupational injuries or tasks in dangerous environments meant that automation soon led the manufacturing charge into the technological age.

Manufacturing was the biggest industry to benefit from the changes that computers and automation brought to the table, something which became even clearer as technology continued to become more sophisticated. For example, car companies that were mass-producing their latest makes and models could implement features such as automatic windows and cruise control using feedback mechanisms.

The Present Day

Today, we see Industry 4.0 leading the charge for industrial transformation in manufacturing, and it is being adopted in other industries too. It has also been referred to as Industrie 4.0 in academic, government and industrial collaborations. Industry 4.0 is characterised by (but not limited to) the Internet of Things and is the result of 50-60 years of technological advancements.

Everything now is available “on the cloud”.

It’s not a real cloud; that’s just a term to describe ubiquitous, ever-present access to a network, or the Internet [Grandad, that explanation was for you].

Artificial intelligence (AI) rides shotgun in the Industry 4.0 carpool, as it has helped to develop the intelligence demonstrated by machines, getting to a level now where a machine can learn to reach a desired outcome without a human needing to program every step. A landmark early demonstration of machine intelligence came all the way back in 1997, when an IBM® computer called Deep Blue® beat world chess champion Garry Kasparov in a six-game match which lasted several days.

Industry 4.0 builds on the digital revolution and has explored ways that technology can break new ground, becoming embedded in objects, societies and the human body in ways we could only have imagined before, as with self-driving cars.

Computer science and cyber security will play a crucial role, more so than ever before, in ensuring that these cyber-physical systems continue to provide the features that we all benefit from.

Industry 4.0 is the latest stage in many organisations' lifecycles, helping them to use data, analytics, and artificial intelligence to deliver personalisation and instant gratification to a world of consumers who have grown accustomed to receiving everything on demand.

Whether you are looking to develop the next ground-breaking application or piece of software that contributes to Industry 4.0 or builds on the Industrial Internet of Things, to start managing the projects that deliver these applications, or to protect them from cyber threats, e-Careers offers digital certifications that are leading the next generation of the revolution.

Call us on +44 (0) 20 3198 7700 to speak to one of our Career Consultants today, to discuss your career goals or get started.